Federal AI Law Won’t Save You. The States Already Moved.

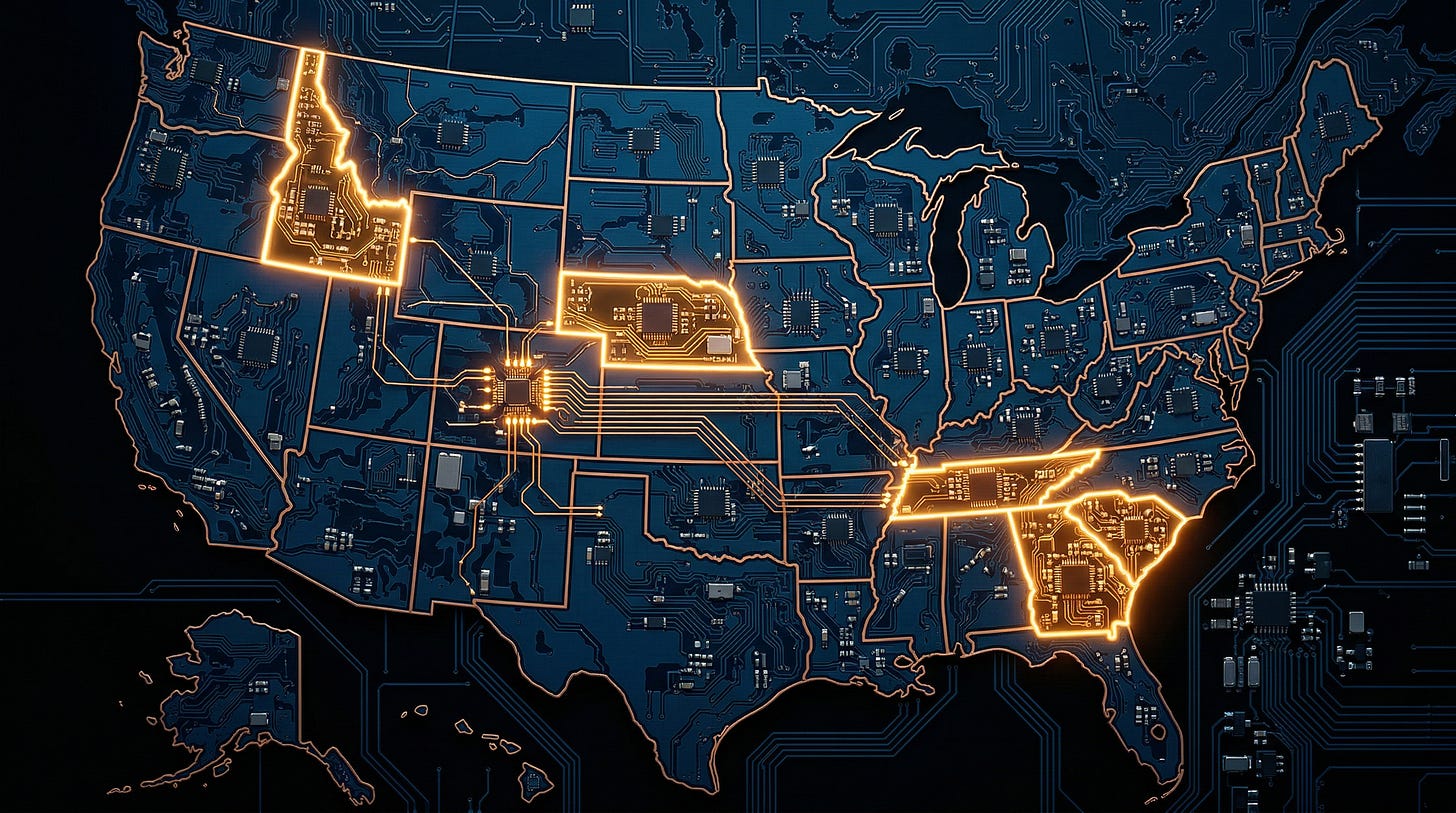

Five states, five sets of rules, zero alignment. The patchwork everyone warned about just showed up.

The State AI Regulation Wave Is Here, and Your Clients Aren’t Ready

TL;DR: Five states advanced AI legislation in a single week, covering mental health chatbots, insurance AI, child safety, and deepfakes. Tennessee’s new law includes a private right of action. With 35+ states pushing AI bills and no federal law in sight, the compliance burden is real and growing. If you advise businesses that build or use AI, Monday morning starts now.

You know that feeling when a client calls and says, “Hey, I just heard about this new law, should we be worried?” And you think: which one?

That’s where we are with state AI regulation. Not next year. Not “on the horizon.” Right now. In a single week straddling March and April 2026, five states moved significant AI legislation forward. Tennessee’s governor signed a bill into law. Georgia passed three. Idaho sent four to the governor. South Carolina’s House approved a social media safety act 114-0. And Nebraska, in maybe the most creative legislative move I’ve seen this year, quietly attached chatbot safety rules to an agricultural privacy bill.

I want to walk you through what actually happened and why it matters. But I also want to be honest about something: the patchwork problem that everyone’s been warning about? It’s not a warning anymore. It’s Tuesday.

Tennessee: AI Can’t Pretend to Be Your Therapist

Governor Bill Lee signed SB 1580 on April 1. The law itself is short, less than a page. But don’t let that fool you.

If you develop or deploy an AI system, you cannot advertise or represent that the system is, or can act as, a qualified mental health professional. One rule. Clear as day. Backed by a $5,000 penalty per violation under Tennessee’s Consumer Protection Act.

And here’s the part that matters most: the law includes a private right of action. Individuals can sue directly. They don’t need to wait for the attorney general to get around to it.

The bill passed the Senate 32-0. The House 94-0. When’s the last time you saw a legislature agree on anything unanimously? That tells you something about where the political energy is on this issue.

Now, the sponsor was careful to say that mental health professionals can still use AI as a tool. Nobody’s banning therapists from using technology. What they’re banning is the AI pretending to be the therapist. That’s the line.

Think about what falls near that line, though. AI wellness apps. Chatbots offering emotional support. Telehealth platforms where the AI generates the responses and a human maybe reviews them later, maybe doesn’t. If your client is anywhere in that neighborhood, they need to look hard at how they’re marketing those products. Because “AI-assisted care” and “AI acting as your therapist” just became a legal distinction with real money attached to it.

California already has similar bills in play. This kind of law is going to spread.

Georgia: Three Bills, One Week, One Clear Direction

Georgia’s legislature wrapped its session by sending three AI bills to the governor, and each one is aimed at a different problem.

SB 540 deals with companion chatbots, the ones designed to simulate personal relationships. Sometimes romantic ones. The bill says these chatbots have to remind users every three hours that they’re talking to a machine. For kids, that reminder comes every hour. It also includes protocols for when a user expresses thoughts of self-harm or suicide.
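
For product teams, the reminder cadence described above can be sketched in a few lines. This is a minimal illustration, assuming the clock runs from the last reminder shown; the bill text may measure the interval differently, and the function and constant names are hypothetical.

```python
from datetime import datetime, timedelta

# Intervals as described in this article for SB 540.
# Names and the "time since last reminder" rule are assumptions.
ADULT_INTERVAL = timedelta(hours=3)
MINOR_INTERVAL = timedelta(hours=1)


def reminder_due(last_reminder: datetime, now: datetime, is_minor: bool) -> bool:
    """Return True when the 'you are talking to a machine' reminder is due."""
    interval = MINOR_INTERVAL if is_minor else ADULT_INTERVAL
    return now - last_reminder >= interval
```

A chat loop would call `reminder_due` before each response and reset `last_reminder` whenever the notice is shown.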

The insurance bill is more straightforward but just as important. When a patient’s medical procedure gets denied coverage, a human has to make that call. Not AI. Not an algorithm. A human with the authority to override. This isn’t coming out of nowhere. The American Medical Association found that 61% of physicians worry that unregulated AI in insurance will increase denials. Georgia legislators listened.

The third bill creates a study committee. I know, I know. Study committees can be where ideas go to die. But this one’s different. Georgia Republicans went out of their way to check with the Trump administration before moving these bills, making sure they wouldn’t trigger federal pushback. They even built in delayed effective dates to give Congress room to act first.

One Georgia lawmaker put it perfectly: “We shouldn’t have a patchwork of 50 different rules. But we can’t expect that Congress will move as expeditiously as we would like them to.”

Put that on a poster.

Idaho: The Surprise Package

Idaho. Not the first state that comes to mind when you think about tech regulation. But they advanced four AI bills in a single week, and Governor Little already signed the education bill.

SB 1227 tells the state Department of Education to build a statewide framework for generative AI in schools. AI literacy standards for K-12 students. Policies every school district has to adopt. And an explicit line in the law saying AI tools cannot replace human teachers. They also announced a partnership with Micron and Microsoft to provide AI training for educators at no cost, which is the kind of public-private thing that actually makes a difference when you’re trying to move fast.

SB 1297, the chatbot safety bill, passed both chambers. HB 542 goes after addictive social media features targeting kids. A fourth bill addresses synthetic media and deepfakes.

What caught my eye about Idaho is the range. Education, child safety, chatbots, and deepfakes. All in one session. All with bipartisan support in a deep-red state. If you’re waiting for AI regulation to become a partisan issue that stalls along party lines, Idaho just told you that’s not happening.

South Carolina: 114-0

HB 4591, the Stop Harm From Addictive Social Media Act, passed the South Carolina House without a single dissenting vote. Not one. It now sits with the Senate.

The bill targets platforms pulling in at least $1 billion in annual global revenue. It requires age estimation, parental consent for children under 17, and it forces platforms to disable addictive features for minors: infinite scroll, push notifications, like counts, badges for heavy use. No targeted ads for kids.

Here’s the aggressive part. If a platform lets a child open an account without proper parental consent, the entire user agreement with that child is void. Gone. Including arbitration clauses. Including limitation of liability. As a matter of public policy, not subject to waiver. The bill also creates a private right of action for children and parents covering mental health damages and emotional distress.

South Carolina already signed a separate Social Media Regulation Act into law back in February. This new bill stacks on top of it. These folks aren’t messing around.

Nebraska: The Trojan Horse

I have to give Nebraska credit for the move here. Senator Eliot Bostar had a Conversational AI Safety Act (LB 1185) that couldn’t build enough momentum on its own. So what did supporters do? They attached it to the Agricultural Data Privacy Act, a popular bill giving farmers control over their agronomic and livestock data. The combined package cleared first-round debate 35-0.

Nobody’s going to vote against protecting farmers’ data. And now chatbot safety provisions ride along with it.

The AI rules mirror Oregon’s recently enacted chatbot safety law. Disclosure when users interact with AI. For minors: no sexually explicit content, no romantic language, no features simulating emotional dependence. Protocols for identifying self-harm or suicide prompts. The agricultural piece is real too. Starting January 2027, every new contract involving ag data in Nebraska must prohibit selling that data without the producer’s written consent. Violations are $1,000 per incident.

The Patchwork Problem Isn’t Coming. It’s Here.

Here’s the part that should worry you if you’re advising businesses that operate in more than one state. Which is almost everyone.

More than 35 states have active AI bills right now. The Future of Privacy Forum is tracking 98 chatbot-specific bills across 34 states, plus three federal proposals. In 2025 alone, state legislators introduced over 1,100 AI-related bills.

And the definitions don’t line up. What counts as a “chatbot” in Idaho isn’t the same as what counts in Washington. Oregon’s disclosure rules differ from Georgia’s. Tennessee’s private right of action works differently from California’s. A company deploying AI hiring tools across California, Colorado, Illinois, and Texas has to satisfy four different definitions of “high-risk” AI, meet four different audit timelines, follow four different disclosure requirements, and face four different penalty structures.

Most companies try to comply with the strictest version everywhere. That’s expensive. And it still might not be enough, because the strictest version in one category might come from a different state than the strictest version in another.
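
To see why "just follow the strictest state" breaks down, here is a small sketch. The states and numbers are placeholders invented for illustration, not actual legal requirements, and the assumption that a lower number means a stricter rule is mine.

```python
# Hypothetical requirements. Lower value = stricter (fewer days allowed).
# "State A"/"State B" and all numbers are placeholders, not real law.
requirements = {
    "State A": {"audit_interval_days": 365, "disclosure_deadline_days": 30},
    "State B": {"audit_interval_days": 180, "disclosure_deadline_days": 60},
}


def strictest_by_category(reqs):
    """For each category, return (state, value) with the lowest value."""
    result = {}
    for state, rules in reqs.items():
        for category, value in rules.items():
            if category not in result or value < result[category][1]:
                result[category] = (state, value)
    return result


strictest = strictest_by_category(requirements)
```

In this toy data, State B sets the audit bar and State A sets the disclosure bar, so no single state's rulebook works as the universal standard.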

The Trump administration sees this problem. A December 2025 executive order created a DOJ task force to challenge state AI laws. In March 2026, the White House proposed a single federal standard. But none of that is law yet. Not even close. And until it is, every one of those state laws is fully enforceable.

I keep coming back to what that Georgia lawmaker said. They built in delayed effective dates because they want Congress to act. But they went ahead and passed the bills anyway, because they know Congress probably won’t. This is exactly how state privacy law played out. Only faster.

What to Do Monday Morning

Map your exposure. If you or your clients build, deploy, or advise on AI tools that touch mental health, insurance, chatbots, or anything child-facing, you need a state-by-state picture of where those products are available and what laws apply. Not next quarter. This week.
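
If a spreadsheet feels too loose, an exposure map can start as a simple lookup. The sketch below is one possible shape, not a compliance tool: the bill numbers and statuses come from this article, while the topic tags, the `Product` example, and the matching rule are my own simplifying assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    state: str
    bill: str
    topics: frozenset  # simplified topic tags (assumption)
    status: str        # status as described in this article


@dataclass(frozen=True)
class Product:
    name: str
    topics: frozenset
    states: frozenset  # states where the product is available


RULES = [
    Rule("TN", "SB 1580", frozenset({"mental_health"}), "signed"),
    Rule("GA", "SB 540", frozenset({"chatbot"}), "sent to governor"),
    Rule("SC", "HB 4591", frozenset({"child_facing"}), "passed House"),
]


def exposure(product, rules):
    """Return rules whose state and at least one topic match the product."""
    return [
        r for r in rules
        if r.state in product.states and r.topics & product.topics
    ]


# Hypothetical product used only to show the lookup.
app = Product(
    "WellnessBot",
    frozenset({"mental_health", "chatbot"}),
    frozenset({"TN", "GA"}),
)
hits = [r.bill for r in exposure(app, RULES)]
```

Keeping the status next to each rule lets you separate what is enforceable today from what is still moving.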

Follow the private right of action. Tennessee, South Carolina, California, New Hampshire, and others are creating direct paths for individuals to sue over AI harms. For law firms, this is a new practice area forming right in front of you. For companies, it changes the math on what “good enough” compliance looks like.

Stop waiting for federal preemption to save you. The executive order signals intent, but the legal fights will take years. Every company that delays compliance is betting on a timeline they don’t control. That’s a bad bet.

The common threads across all five states are hard to miss: protect kids, tell people when they’re talking to AI, keep humans in charge of healthcare decisions, and hold companies accountable when AI causes harm. None of that is radical. It’s the floor.

And the floor is rising fast.

If you read this far, you’re not someone who’s going to get caught flat-footed when a client calls about a state law you’ve never heard of. You’re the person trying to figure out how to build an advisory practice around something that’s moving faster than the bar associations can publish guidance on.

That’s the conversation I have every day with managing partners and practice leaders who are watching this space and trying to decide what’s real and what’s noise. If you’re working through how to map your firm’s exposure or how to talk to clients about multi-state AI compliance, send me a note at steve@intelligencebyintent.com. Tell me what you’re seeing. I’ll tell you what I’m seeing, straight, including what’s settled and what’s still a mess.