Two AIs. One Credit Agreement. Both Caught the Default You'd Miss.

A senior associate would burn three days on this. Both models finished in twenty minutes. One built a better model, the other wrote a better memo, and both flagged a day-one default.

TL;DR

I gave a CLE at the Beverly Hills Bar Association this past Wednesday walking attorneys through ten AI-assisted workflows across the transactional arc. The one that got the loudest reaction was the loan covenant analysis. For the CLE session I ran the demo with Claude Opus 4.7 on adaptive thinking. The next day, OpenAI dropped the latest version of ChatGPT (GPT-5.5). I decided to run the same analysis in their new Codex tool with the new GPT-5.5 on xhigh thinking so I could compare results. Each took about twenty minutes. Both produced partner-ready work product. Both caught the day-one default. GPT-5.5 built the deeper spreadsheet. Opus wrote the better Word doc. Different brains for different jobs, and either one beats the three full days a senior associate would have burned on the same task.

The moment every finance partner recognizes

Every finance partner has had this moment. A senior associate hands in a covenant memo. The legal reasoning is solid. The math doesn’t tie. Or the math is right and the defined terms got mangled. Almost no junior lawyer is genuinely strong at both halves of credit work, so partners either do it themselves or run shuttle diplomacy between an associate and a banker until the numbers reconcile.

That’s the bottleneck I wanted to test.

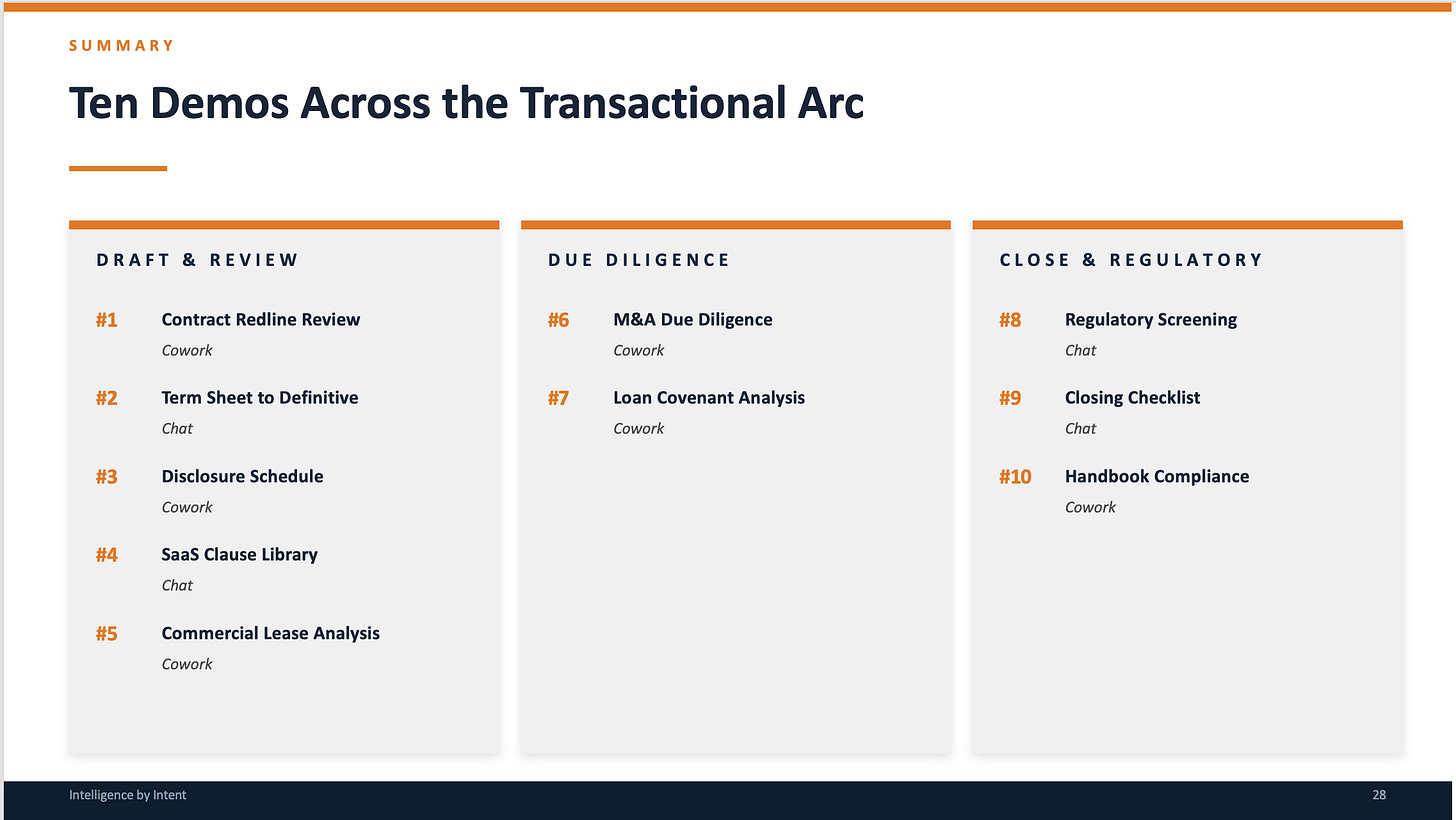

What I showed at the BHBA

The full CLE covered ten transactional workflows across drafting, diligence, and close. Contract redline review on a heavily marked-up MSA. Term sheet to first-draft definitive agreement. Disclosure schedule drafting. SaaS clause libraries. Commercial lease review. Full M&A diligence. Regulatory screening. Closing checklist with dependency mapping. Employment handbook compliance audit. And the one I want to dig into here: loan covenant analysis.

The covenant analysis is the demo that most obviously needs both halves of the brain. Read a 50-page credit agreement, parse defined terms across cross-references, then build the EBITDA bridge and run the math against quarterly financials. It’s the work senior associates either avoid or do badly.

The setup

For the demo I built a sample senior secured credit agreement. $175M Term Loan B, $40M revolver, sponsor-backed industrial manufacturer eighteen months past LBO. Four maintenance covenants. Net leverage stepping down from 5.50x to 4.00x by 2029. Fixed charge coverage of 1.15x. Liquidity floor of $12M. Capex cap of $8.5M. The Adjusted EBITDA definition included all the add-backs that show up in real sponsor-backed deals: restructuring up to $5M, sponsor management fees capped at $2.5M annually, run-rate savings capped at 25% of EBITDA. I built a full year of quarterly financials with messy line items, plus a $5M subordinated seller note with its own tighter senior leverage covenant.

Then I asked both models to produce the same deliverable. Full covenant analysis. Current compliance shown step by step. Headroom by covenant. A 15% revenue stress case. Conflicts with existing debt. Market commentary calibrated to a sponsor-backed mid-market credit. A covenant dashboard. Prioritized markup list with draft language. And a CFO compliance certificate template.

A senior associate would burn three full days on this. Each model finished in about twenty minutes.

What both models surfaced

This is the part that should change how you think about the work.

Both models read the EBITDA definition, walked the add-backs through the caps, and built the bridge from a $4.8M net loss to $29.2M of Adjusted EBITDA. Both netted Consolidated Total Debt down to $186.6M after applying the $15M cash netting cap. Both calculated net leverage at 6.39x against the 5.50x maximum. Both calculated FCCR at 1.10x against the 1.15x minimum. The deal doesn’t close as drafted: on the actual numbers I gave them, it is a day-one breach on two covenants.
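The two headline ratios are simple enough to re-check by hand. Here is a minimal Python sketch that uses only the figures quoted above. The `CovenantTest` class and the `ebitda_needed_for_max_leverage` helper are illustrative names of my own, not anything either model produced, and the FCCR is taken as reported rather than rebuilt from its components.

```python
from dataclasses import dataclass


@dataclass
class CovenantTest:
    """One maintenance covenant test (hypothetical helper for illustration)."""
    name: str
    actual: float
    threshold: float
    is_maximum: bool  # True: actual must be <= threshold; False: actual must be >= threshold

    def passes(self) -> bool:
        if self.is_maximum:
            return self.actual <= self.threshold
        return self.actual >= self.threshold

    def headroom(self) -> float:
        """Positive means cushion, negative means breach (in turns of the ratio)."""
        if self.is_maximum:
            return self.threshold - self.actual
        return self.actual - self.threshold


def ebitda_needed_for_max_leverage(net_debt: float, max_leverage: float) -> float:
    """Minimum EBITDA at which net_debt / EBITDA sits exactly at the covenant level."""
    return net_debt / max_leverage


# Figures quoted in the article, in $ millions.
NET_DEBT = 186.6         # Consolidated Total Debt after the cash netting cap
ADJUSTED_EBITDA = 29.2

leverage = CovenantTest("Net leverage", NET_DEBT / ADJUSTED_EBITDA, 5.50, is_maximum=True)
fccr = CovenantTest("FCCR", 1.10, 1.15, is_maximum=False)  # 1.10x taken as reported

for test in (leverage, fccr):
    status = "PASS" if test.passes() else "BREACH"
    print(f"{test.name}: {test.actual:.2f}x vs {test.threshold:.2f}x -> {status} "
          f"(headroom {test.headroom():+.2f}x)")

# Derived here, not taken from either model's output.
shortfall = ebitda_needed_for_max_leverage(NET_DEBT, 5.50) - ADJUSTED_EBITDA
print(f"EBITDA shortfall to reach 5.50x at current debt: ${shortfall:.1f}M")
```

Run as written, both tests come back as breaches, and the EBITDA shortfall at current debt works out to roughly $4.7M. That last figure is my own arithmetic on the quoted numbers, so treat it as a sanity check, not as part of either model's deliverable.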

Both models also flagged what is, in my view, the more dangerous problem: the subordinated seller note’s senior net leverage covenant is set at 4.75x, and the borrower currently sits at 7.72x. Already in default. The new credit agreement’s $2M cross-default threshold catches that note. Unless the seller note is waived or amended at closing and a cross-default carveout is added, the new facility inherits the existing default the day it funds.
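The chain of reasoning here has two links: the seller note is in breach of its own covenant, and the note is large enough to trip the new facility's cross-default. A deliberately simplified sketch of that logic follows. The function names are hypothetical, and I am assuming the threshold is tested against the note's $5M principal on an "at or above" basis. Real cross-default clauses also turn on grace periods, acceleration rights, and notice mechanics that this ignores.

```python
def seller_note_in_breach(senior_net_leverage: float, max_senior_net_leverage: float) -> bool:
    """True if the note's senior net leverage covenant (a maximum) is exceeded."""
    return senior_net_leverage > max_senior_net_leverage


def cross_default_triggered(
    defaulted_principal: float,
    threshold: float,
    waived_or_carved_out: bool = False,
) -> bool:
    """Simplified test: other debt in default at or above the threshold.

    Assumption: 'at or above' is used here for illustration only; the actual
    agreement language controls.
    """
    if waived_or_carved_out:
        return False
    return defaulted_principal >= threshold


# Figures quoted in the article ($ millions for principal and threshold).
note_breach = seller_note_in_breach(senior_net_leverage=7.72, max_senior_net_leverage=4.75)

as_drafted = note_breach and cross_default_triggered(defaulted_principal=5.0, threshold=2.0)
with_fix = note_breach and cross_default_triggered(
    defaulted_principal=5.0, threshold=2.0, waived_or_carved_out=True
)

print(f"Seller note in breach: {note_breach}")
print(f"Cross-default as drafted: {as_drafted}")
print(f"Cross-default with waiver or carveout: {with_fix}")
```

The only input that flips the result is the waiver or carveout flag, which is why that fix belongs on the closing checklist.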

Both models flagged the absence of an equity cure right, which is unusual for a sponsor-backed mid-market deal in 2026. It matters because the stress case shows the borrower would need $14M of incremental EBITDA support to stay compliant under a 15% revenue decline. Without a cure, that’s an amendment-and-forbearance conversation, not a sponsor wire transfer.

Both flagged the capex covenant as technically passing but operationally constraining: $7.8M of FY2025 capex against the $8.5M cap is only an 8.2% cushion, and maintenance capex alone is $4.2M. Both noted that the agreement uses an undefined term, “Total Net Funded Indebtedness,” in the incremental debt covenant.
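The capex cushion is worth seeing as arithmetic, because the percentage hides how little room is left in dollars. A short sketch using the figures above follows. The variable names are mine, and the split between maintenance and other capex is just subtraction on the quoted numbers, not a figure from either model.

```python
# Figures quoted in the article, in $ millions.
CAPEX_CAP = 8.5
FY2025_CAPEX = 7.8
MAINTENANCE_CAPEX = 4.2


def cushion_pct(actual: float, cap: float) -> float:
    """Unused portion of a cap, as a percentage of the cap."""
    return (cap - actual) / cap * 100


cushion = cushion_pct(FY2025_CAPEX, CAPEX_CAP)
remaining = CAPEX_CAP - FY2025_CAPEX              # dollars left under the cap
non_maintenance = FY2025_CAPEX - MAINTENANCE_CAPEX  # FY2025 spend above maintenance
room_above_maintenance = CAPEX_CAP - MAINTENANCE_CAPEX

print(f"Cushion: {cushion:.1f}% (${remaining:.1f}M remaining)")
print(f"FY2025 capex above maintenance: ${non_maintenance:.1f}M")
print(f"Total room above maintenance under the cap: ${room_above_maintenance:.1f}M")
```

The 8.2% cushion is $0.7M of room, which is the number to bring to the markup call.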

This isn’t pattern matching. This is reading.

Different strengths

GPT-5.5 built the deeper spreadsheet. Thirteen linked tabs. Dashboard, Assumptions, Source Financials, EBITDA Build, Debt and FCCR, Covenants, Stress Model, Sensitivities, Basket Capacity, Reporting, Negotiation, Checks, Sources. Color-coded inputs in blue, formulas in black, cross-sheet links in green. The dashboard pulls live from the covenant tab, which pulls from the EBITDA build, which pulls from the source financials. Drop in a different revenue assumption and the whole stress case rebuilds. The sensitivity tab feeds a chart on the dashboard. This is what a senior banking analyst would build. It’s an actual model, not a report dressed up in spreadsheet form.

Opus 4.7 wrote the better Word doc. The covenant package summary read more lawyerly. The prioritized negotiation list was easier to walk a partner through, and the proposed markup language was tighter and closer to drop-in ready. The cross-checks section caught the same drafting issues but framed them in the order a finance attorney would actually raise them with the agent.

Different brains for different jobs. If I were prepping for the markup call, I’d take Opus’s narrative analysis into the partner meeting and GPT-5.5’s spreadsheet into the call with the financial advisor. The right answer here is to use both models on the same matter and let each do what it does best.

The thing partners ask about first

You should not take any of this output to a client or counterparty without reviewing every number and every defined term. Rule 1.1 competence applies. AI doesn’t have a bar number. You do. The work still needs you. What changes is that the first 80%, the part that used to eat three days, now gets done over lunch. You spend your time on the part where judgment actually matters.

Two more things partners ask about. First, plan tier matters. Free and Pro tiers on either platform are consumer-grade and not appropriate for client-confidential work. Use Claude Team or Enterprise. Use ChatGPT Business or Enterprise. If you can’t tell the client where the data sits and who can see it, the data does not go there.

Second, both models will hallucinate a defined-term cross-reference if you don’t give them the actual definitions. I gave both the full definitions section. Skip that step and the output is wrong in ways that look right.

What to do Monday morning

Pick a credit agreement currently on your desk and the borrower’s last four quarters of financials.

Run the covenant analysis through your firm’s approved AI platform on a Team or Enterprise tier, and check the EBITDA bridge against your own model. That’s where errors hide.

Compare the AI’s prioritized markup list to the one you would have built yourself. The differences are blind spots, in either direction.

That last step is the one that will change how you staff your next deal.

The bigger point

A year ago, neither model could have done this. Now both can, and they do it in twenty minutes. One of them quietly built a better financial model than most associates would. The other wrote a better legal memo than most associates would. The cost curve for this work just dropped through the floor.

I have shared the full outputs from GPT-5.5 in a Google Drive folder linked here so you can see what it’s creating these days.

Open the spreadsheet. Imagine the scenario. Then ask yourself how many hours your team billed last quarter on work two machines could do between meetings.

The point of this exercise isn't that AI replaces credit lawyers. It's that the part of the work that used to eat the calendar (reading defined terms, building the EBITDA bridge, running the stress case) has collapsed to twenty minutes per pass. What's left is judgment, and that's the part you were always supposed to be doing anyway. If you want to talk through how this would run on a deal currently sitting on your desk, send me a note at steve@intelligencebyintent.com. The firms figuring this out now will be the ones partners want to be at when the next downturn rewrites every credit agreement in the market.